I’m on my way home from KubeCon. Regretfully, I had to leave early, but I still got to attend the co-located events and the Platform Engineering Executive Roundtable and, most importantly, chat with many smart folks from the community about the state of Cloud Native, AI, and Platform Engineering. Here are my conclusions from these valuable conversations.

AI Coding Isn’t Delivering on its Promises (or Threats)

Yes, we all are generating incomparably more code. Everybody is running their Spec-Driven Development and Ralph Loop experiments, and new tools are being built daily. My good friend Engin Diri just released a tool to generate configs for all the major AI coding platforms out there in one shot: https://github.com/dirien/yet-another-agent-harness. But companies aren’t getting significantly more productive because of that (yet?). Existing software doesn’t seem to evolve faster. Overall, the confusion around what to build is even bigger than it used to be.

And it looks like the job market slowdown is more about that: not engineers being replaced by AI, but entrepreneurs and investors having no clarity about what software will be needed a year from now. E.g., do we really need another SRE AI agent?!

Moreover, the question of software quality is everywhere. How do we review all the slop code? Are humans even able to review it? And if not, can we trust AI to review AI code?

And when talking about quality guardrails - what does an AI-Native SDLC even look like?

A lot of questions. Not too many good answers.

Mind you - I’m not saying that AI coding is going away. It’s totally clear this is the way we’re going to code. It’s just still not so clear what that means for us as professionals and as an industry.

AI and Cloud Native - We Need More!

One thing that’s clear - we’re gonna need more infrastructure. Training, fine-tuning, serving, prefilling, encoding, decoding - they all have their own infrastructure, orchestration, and resource allocation needs. And we’re already running them on cloud native infra that’s been battle-tested. It just doesn’t always have the specific controls that AI workloads need. Therefore, Dynamic Resource Allocation (DRA) gets ever more relevant, new scheduling patterns evolve, and AI-native networking becomes a thing.

I believe this is good news: for cloud providers, who are already selling more of those costly GPU/TPU/NPU instances than ever, but also for cloud infrastructure professionals - all this infrastructure isn’t going to deploy, support, or troubleshoot itself. “But what about the autonomous DevOps agents that we are all waiting for?” you ask. Which brings us to the topic of trust.

We Don’t Trust AI

The excitement around AI is tremendous, but the trust is low. As the poll results at our latest webinar showed, fewer than 5% of organizations allow AI agents anywhere near their production environment. At KubeCon, one could hear the same sentiment at all the booths promoting agentic SRE solutions.

To actually have autonomous infrastructure, significant investment in guardrails is required. That’s why there was so much interest around AI-native networking - specifically the agentgateway project that was contributed to the Linux Foundation in August last year. Sandboxing - i.e., running AI agents in strictly confined environments that allow executing untrusted LLM-generated code and commands without granting access to sensitive information or controls - is also drawing a lot of attention. To make sandboxing available on Kubernetes, the Agent Sandbox project is being built by SIG Apps.

So once again - we do want more AI in our infra, and we do see it managing more and more of it, but that also requires us to introduce more software and more guardrails, and it brings us back to the question: “Who watches the watchers?”

The Evolution of Platform Engineering

The concerns around trust and security also echoed at the Platform Engineering Executive Roundtable that I attended. Security and the introduction of the CRA were the main topics of discussion, but of course, the expectation that most code will be AI-generated by the end of next year influenced it heavily. One interesting observation I had is that while internal delivery platforms were previously touted mainly for their productivity and software quality benefits, it now seems like compliance and safeguards are taking the front seat. Some roundtable participants even explicitly said that platform engineering isn’t about delivering fast - it’s about delivering in compliance with organizational guidelines. Which, in my experience, is a dangerous statement, because if the platform becomes a bottleneck, engineers will find a way to hack around it. I’ve seen this happen too often in my career.


Is FinOps the Blind Spot of Platform Engineering?

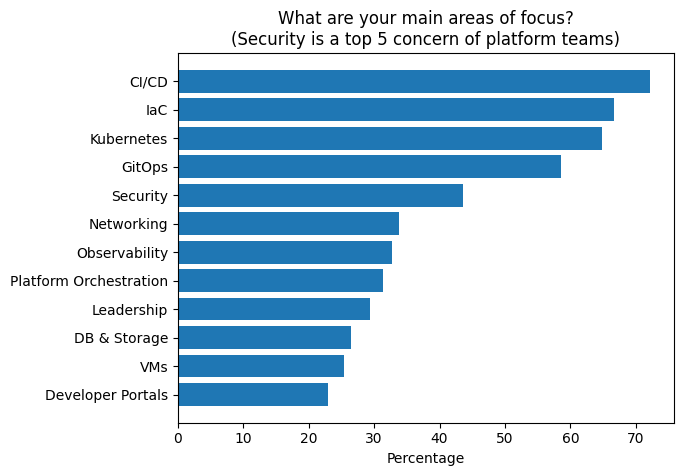

The following chart, presented at the Platform Engineering Executive Roundtable, shows the main areas of focus of platform teams as documented by the platformengineering.org survey from 2025:

As discussed earlier, security is very important - even though not as important as Kubernetes or CI/CD. But the thing that struck me most is that FinOps is not even mentioned.

Optimizing cloud costs is what we do at DoiT (with PerfectScale specifically focused on Kubernetes costs) - so in the last year, I spoke to a number of FinOps professionals about their day-to-day needs and concerns. One notion that I repeatedly heard was that in order for FinOps not to be a catch-up game, FinOps guidelines and observability need to be integrated into the delivery platform. And yet - it looks like platform teams still don’t see this as an area of their focus. On the other hand, this may also be tied to how the survey was done - for example, if FinOps wasn’t one of the options to choose from. One thing that’s clear to me - with cloud costs only climbing due to GenAI and agentic software - platform teams had better put FinOps and cost optimization on their task list, so their platform stays a force multiplier and doesn’t become a cost center.

To Sum it All Up

- KubeCon was a blast, as always.

- AI is changing our industry, but at a much slower pace than those selling it want us to believe.

- The trust in AI is low, and a lot more guardrails are needed before we let agents run freely in production.

- As GenAI evolves, we will need more infrastructure, not less of it - so yes, the job market will change, but I seriously doubt it will shrink. Somebody will still need to supervise the agents and whatever infrastructure they are running on.

And what are your observations from KubeCon?
