Environmental sustainability may not be the first thing that comes to mind when you think about Kubernetes. But making your cloud computing more sustainable is an effective way to reduce your environmental footprint while also cutting cloud costs.

As Kubernetes adoption continues to grow, understanding how this open source orchestration platform can help reduce the carbon (CO2) impact of our digital lives should be top of mind. That said, many companies today do not have a solid grasp of how Kubernetes affects the environment, or even of why the world needs more sustainable software.

One big reason to pay attention is new regulation. The US and the European Union have issued carbon emissions regulations and set goals to reduce emissions by 40% and 55%, respectively, by 2030. Reporting requirements are also real: companies will be penalized or incentivized depending on whether they exceed or stay within their emission quotas. Infrastructure efficiency has even started showing up in guidance such as the AWS Well-Architected Framework, whose sustainability pillar asks practitioners to measure and improve infrastructure efficiency, a practice that could eventually harden into more specific requirements.


CO2 in the cloud-first world

The carbon intensity of electricity is the amount of CO2 emitted to produce one kilowatt-hour (kWh) of electricity, usually measured in grams of CO2 per kWh. Different forms of energy production have very different carbon intensities.

Carbon intensity offers a useful way to examine the climate impact of power and how cloud customers are reducing their emissions. Data from the International Energy Agency puts the global average power mix at 545 grams/kWh. For the cloud, the average carbon intensity of the AWS power mix is about 393 grams/kWh. Put this way, it is easy to see that large-scale cloud providers use a power mix (a combination of various fuels) that is about 28% less carbon intensive than the global average.

The study also estimates that a cloud deployment needs only about 16% of the energy an on-premises data center would. Combined with a power mix at about 72% of the carbon intensity (the 28% reduction above), that yields only about 12% of the carbon emissions (0.16 × 0.72 ≈ 0.12). In other words, customers using a managed cloud service like AWS instead of an on-premises data center see an estimated 88% reduction in their carbon footprint.
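The arithmetic behind these percentages can be reproduced in a few lines of Python. This is a minimal sketch that uses only the figures quoted above; the hypothetical 1,000 kWh on-premises workload, the helper names, and the assumption that the global average mix stands in for the on-premises baseline are illustrative choices, not part of the study.

```python
# Figures quoted in the text; intensities are in grams of CO2 per kWh.
GLOBAL_INTENSITY_G_PER_KWH = 545   # IEA global average power mix
AWS_INTENSITY_G_PER_KWH = 393      # average AWS power mix
CLOUD_ENERGY_FRACTION = 0.16       # cloud energy need relative to on-premises


def emissions_kg(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """CO2 (kg) emitted by consuming energy_kwh at a given carbon intensity."""
    return energy_kwh * intensity_g_per_kwh / 1000


# Fraction of the baseline carbon intensity that the AWS mix represents.
intensity_ratio = AWS_INTENSITY_G_PER_KWH / GLOBAL_INTENSITY_G_PER_KWH

# Cloud emissions as a fraction of on-premises emissions.
footprint_fraction = CLOUD_ENERGY_FRACTION * intensity_ratio

# Hypothetical workload: 1,000 kWh on-premises, assumed to run on the global mix.
on_prem_kg = emissions_kg(1000, GLOBAL_INTENSITY_G_PER_KWH)
cloud_kg = emissions_kg(1000 * CLOUD_ENERGY_FRACTION, AWS_INTENSITY_G_PER_KWH)

print(f"Power mix: {1 - intensity_ratio:.0%} less carbon intensive")
print(f"Cloud footprint: {footprint_fraction:.0%} of on-premises "
      f"({1 - footprint_fraction:.0%} reduction)")
print(f"Example workload: {on_prem_kg:.0f} kg vs {cloud_kg:.1f} kg CO2")
```

Multiplying the two fractions, rather than adding up percentage reductions, is what turns a cleaner power mix and lower energy use into an overall reduction of roughly 88%.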

Even though cloud providers such as AWS, Azure, and Google Cloud have increased the share of renewables in their energy use, evolving cloud technologies demand more and more compute power, and thus electricity. Data centers release enough greenhouse gases to be considered direct contributors to climate change, since a single facility can house tens of thousands of servers and draw up to 100 megawatts of electricity.

These numbers show why adding a sustainability pillar to general cloud best practices, as well as to Kubernetes-specific ones, can help organizations measure, manage, improve, and properly budget for their containerized workloads.

The Impact of Kubernetes on CO2

The Kubernetes platform can be considered a sustainability tool, because it lets you scale resources to match demand, which minimizes cloud waste. As a result, many companies see some reduction in their carbon footprint when they first refactor their applications to run on Kubernetes.
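Scaling workloads to match demand is typically handled by the Horizontal Pod Autoscaler (HPA). The Kubernetes documentation describes its core rule as desiredReplicas = ceil[currentReplicas × (currentMetricValue / desiredMetricValue)], with no change made when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below models only that rule plus min/max clamping; the real controller also applies stabilization windows and handles missing or not-ready pod metrics, and the function name, default bounds, and example numbers here are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Simplified HPA rule: scale replicas in proportion to metric / target."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count unchanged.
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds, as minReplicas/maxReplicas do.
    return max(min_replicas, min(max_replicas, proposed))


# Average CPU utilization of 30% against a 60% target: scale in.
print(desired_replicas(current_replicas=10, current_metric=30, target_metric=60))
# Utilization of 90% against a 60% target: scale out.
print(desired_replicas(current_replicas=4, current_metric=90, target_metric=60))
```

Scaling in when utilization drops is where the waste reduction comes from, but only if the freed capacity is also removed at the node level.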

However, Kubernetes is also built to let companies innovate and scale quickly. As an application grows through new code releases and an expanding user base, those initial CO2 reductions can quickly disappear.

Application resilience and availability are also critical to every business. To maximize uptime, Kubernetes-based infrastructure often spans multiple availability zones, which increases the carbon footprint. On top of that, many teams rely on over-provisioning as their main way of keeping applications up and running, which needlessly wastes energy.

Although the Kubernetes scheduler places workloads onto nodes according to their declared resource requests, this built-in bin packing does not guarantee cost savings or carbon reductions. If your Kubernetes environment is over-provisioned, you are simply bin packing wasted resources.
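A deliberately simplified, CPU-only model illustrates the point. It uses first-fit decreasing bin packing, which is not what kube-scheduler actually runs (the scheduler filters nodes whose allocatable capacity fits a pod's requests and then scores them), but it shares the key property: placement is driven by requested resources, not actual usage. The 4-core node size and the per-pod numbers are hypothetical.

```python
def nodes_needed(requests: list[float], node_capacity: float) -> int:
    """Count nodes used by first-fit decreasing packing of CPU requests."""
    free: list[float] = []  # remaining capacity on each node opened so far
    for req in sorted(requests, reverse=True):
        if req > node_capacity:
            raise ValueError(f"request {req} exceeds node capacity {node_capacity}")
        for i, remaining in enumerate(free):
            if req <= remaining:
                free[i] = remaining - req  # fits on an existing node
                break
        else:
            free.append(node_capacity - req)  # no room anywhere: open a node
    return len(free)


NODE_CPU = 4.0                             # hypothetical 4-core worker nodes
actual_usage = [0.5] * 16                  # cores 16 pods really use
inflated = [2 * u for u in actual_usage]   # requests padded to 2x usage

print("right-sized:", nodes_needed(actual_usage, NODE_CPU), "nodes")
print("over-provisioned:", nodes_needed(inflated, NODE_CPU), "nodes")
```

The packing is equally tight in both cases; the over-provisioned cluster simply needs twice as many nodes to hold requests that are half idle.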

Think about it this way: the average car produces about 404 grams of CO2 per mile driven. For clusters with hundreds of worker nodes, the common assumption is that at least 30% of those nodes are wasted, meaning they are unused or could safely be powered off. Powering down just 15 unused servers (Kubernetes worker nodes) can cut roughly 1,000 kg (2,200 pounds) of CO2 emissions each month, which at 404 g per mile is about as much as driving a car 2,500 miles (4,000 km).
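These conversions are simple enough to check directly. A minimal sketch, using the 404 g/mile figure and the 30% rule of thumb from the text; the 200-node cluster and the helper names are hypothetical, and the 1,000 kg monthly figure for 15 nodes is taken as given rather than derived from any particular server's power draw.

```python
CAR_G_CO2_PER_MILE = 404   # average car emissions quoted in the text
KM_PER_MILE = 1.609344


def idle_nodes(total_nodes: int, waste_percent: int = 30) -> int:
    """Nodes assumed wasted under the 30% rule of thumb (rounded down)."""
    return total_nodes * waste_percent // 100


def equivalent_car_miles(kg_co2: float) -> float:
    """Miles an average car would drive to emit the same amount of CO2."""
    return kg_co2 * 1000 / CAR_G_CO2_PER_MILE


monthly_kg = 1000  # figure quoted in the text for 15 powered-down nodes
miles = equivalent_car_miles(monthly_kg)

print(f"{idle_nodes(200)} of 200 nodes assumed idle")
print(f"{monthly_kg} kg CO2/month is about {miles:,.0f} miles "
      f"({miles * KM_PER_MILE:,.0f} km) of driving")
```

At 404 g per mile, each metric ton of CO2 corresponds to roughly 2,475 miles of driving.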

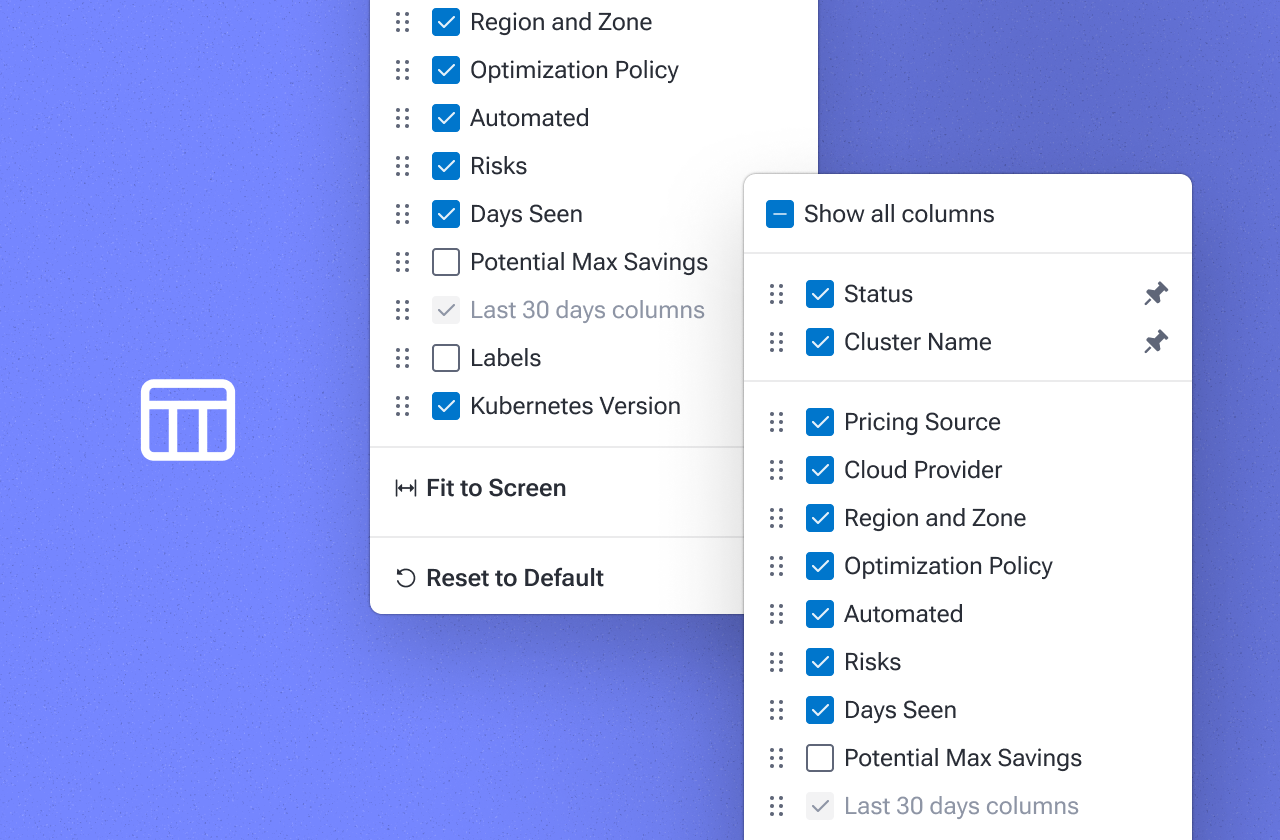

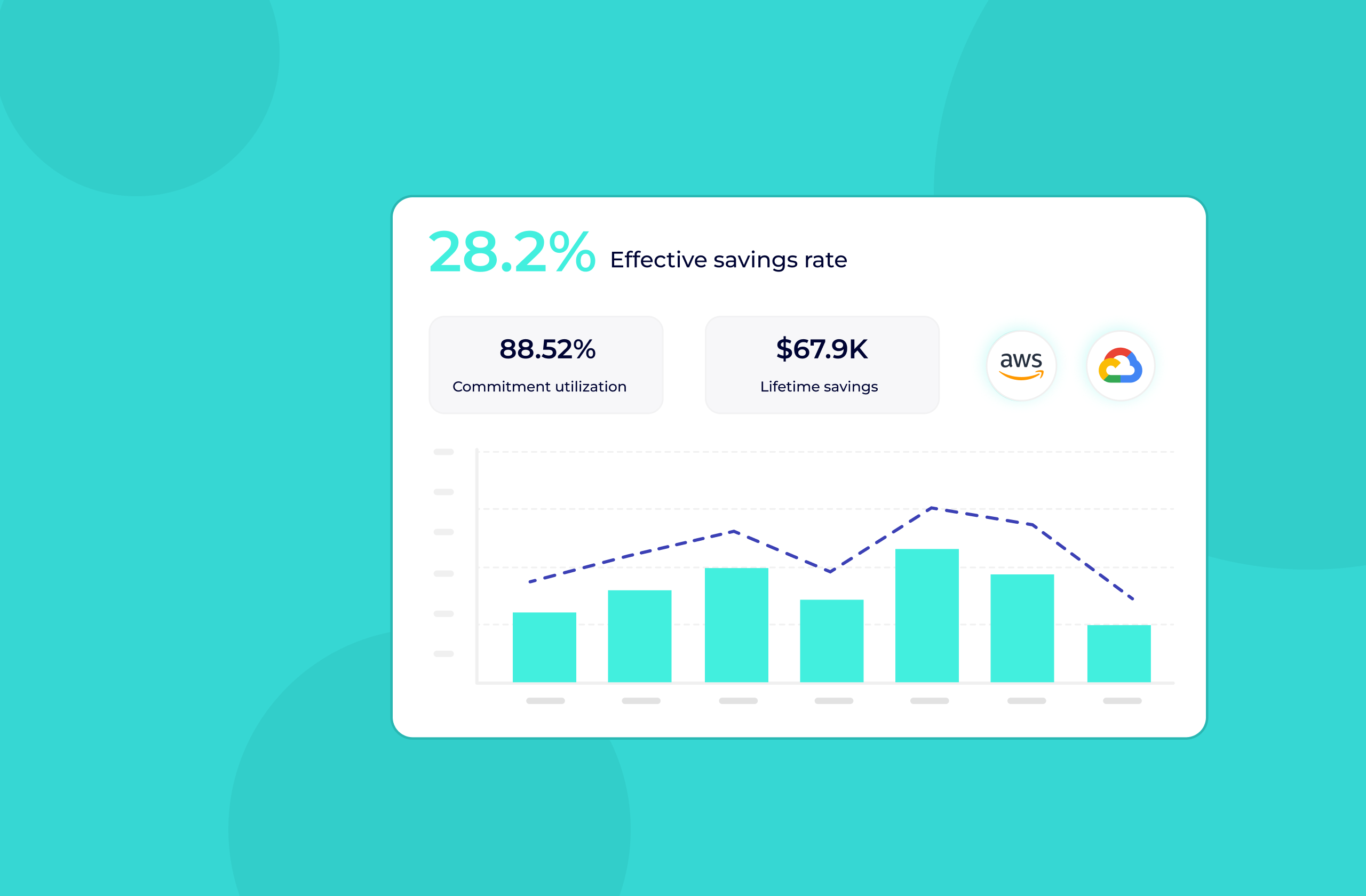

To optimize Kubernetes capacity, practitioners need to understand why right-sizing and proper resource provisioning are critical. The right tooling can help optimize resource provisioning without jeopardizing performance or resilience, helping companies reduce both their costs and their carbon footprint.
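One common way to approach right-sizing is to derive a container's CPU request from its observed usage rather than from a guess. The sketch below is one such heuristic, not PerfectScale's algorithm or any specific tool's: it takes a high percentile (nearest-rank p95 here) of hypothetical usage samples in millicores and adds a 15% safety margin. Both the percentile and the margin are assumptions to tune per workload, and memory needs more care than CPU, because a container that exceeds its memory limit is OOM-killed rather than throttled.

```python
import math


def percentile(samples: list[int], pct: int) -> int:
    """Nearest-rank percentile (pct in 1-100) of a non-empty sample list."""
    if not samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def recommend_cpu_request(usage_millicores: list[int], pct: int = 95,
                          headroom_pct: int = 15) -> int:
    """Suggested CPU request (millicores): high-percentile usage plus headroom."""
    baseline = percentile(usage_millicores, pct)
    return math.ceil(baseline * (100 + headroom_pct) / 100)


# Hypothetical CPU usage samples for one container, in millicores.
samples = [120, 150, 180, 200, 210, 220, 240, 250, 260, 300]
current_request = 1000  # hypothetical current request: one full core

suggested = recommend_cpu_request(samples)
print(f"suggested request: {suggested}m (currently {current_request}m)")
print(f"reserved but unused today: {current_request - suggested}m")
```

Applied across many workloads, reclaiming unused requests like this is what allows the node count, and with it energy use, to come down.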

PerfectScale by DoiT

PerfectScale makes it easy for DevOps and SRE professionals to govern, right-size, and scale Kubernetes to continually meet customer demand. By comparing usage patterns over time with resource configurations, we provide actionable recommendations that improve performance, eliminate waste, and reduce carbon emissions. Get the data-driven intelligence needed to ensure peak Kubernetes performance at the lowest possible cost with PerfectScale. Sign up or book a demo with the PerfectScale team today!

